Web performance analysis of top 10 supermarket websites in Denmark

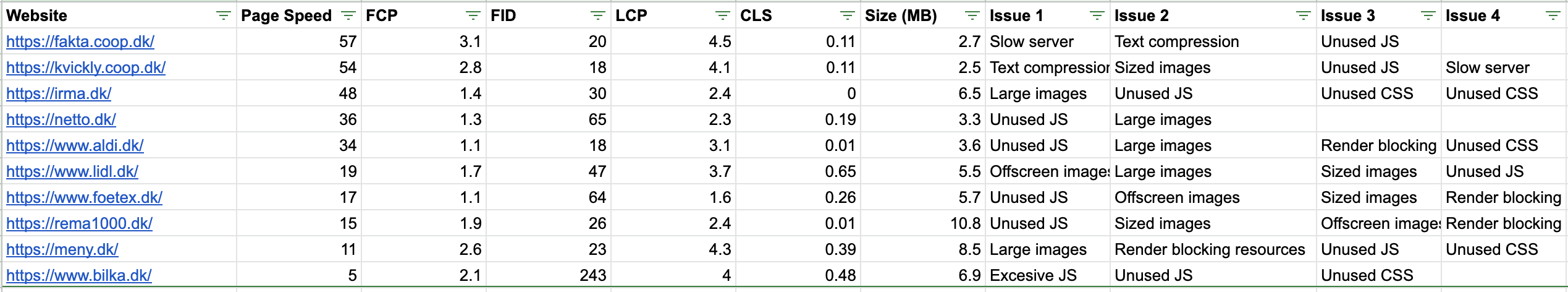

I analyzed the mobile website performance of the top 10 supermarkets in Denmark. My goal was to assess the state of mobile web performance in a less explored niche that many people still visit every day. I have summarised the performance bottlenecks common across the websites and identified the top improvement opportunities for each one. The analysis is divided into an overall technical comparison based on PageSpeed field data and my own observations for each website. The data was collected on 8 July 2020.

Overall

Overall, performance is poor across the websites: only two of them score above 50 points in PageSpeed based on field data. In my eyes, this represents a great opportunity to gain a competitive advantage in Google Search rankings by improving mobile website performance, not to mention giving end users a better experience on mobile devices.

Looking at the data across all websites, performance improvement opportunities can be summarised in two points:

- Load JS libraries on-demand to reduce unused JavaScript code

- Serve lower resolution images in WebP format to mobile devices

Analysis of each mobile website

Fakta

Fakta manages to jump to the top of the list even with a terribly slow Umbraco backend. It takes a whopping 1.5 seconds for the backend to respond to the landing page request. The server also does not use text compression, which results in more bytes being sent over the wire. Both issues could be solved by generating a static HTML version of the landing page and serving it with a minimal web server or even a CDN. In the end, the mobile user experience is saved by a small cumulative layout shift, a low first input delay and a small total payload size.

Kvickly

Unsurprisingly, Kvickly comes second as it belongs to the same COOP group as Fakta and is built using the same technology stack.

Irma

Someone at Irma really loves strawberries. They are serving a lot of them - 4.5 megabytes to be precise. This single image makes up more than half of the mobile website's size. A regression like this could have been caught by a continuous web performance monitoring service.
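
To illustrate the kind of check a monitoring service or CI step could run, here is a minimal sketch of a performance budget. The `ResourceEntry` shape, the `checkBudget` function, the file names and the budget limits are all my own assumptions for illustration; they are not taken from Irma's site or from any particular monitoring product.

```typescript
// One downloaded resource, as it might be exported from a page load report.
interface ResourceEntry {
  url: string;
  type: "image" | "script" | "other";
  bytes: number;
}

// Hypothetical budget: limits are illustrative, not recommendations.
interface Budget {
  maxTotalBytes: number; // limit for the whole page
  maxImageBytes: number; // limit for any single image
}

// Returns a human-readable message for every budget violation found.
function checkBudget(resources: ResourceEntry[], budget: Budget): string[] {
  const violations: string[] = [];
  let total = 0;

  for (const r of resources) {
    total += r.bytes;
    if (r.type === "image" && r.bytes > budget.maxImageBytes) {
      violations.push(
        `${r.url}: image is ${r.bytes} bytes (limit ${budget.maxImageBytes})`
      );
    }
  }

  if (total > budget.maxTotalBytes) {
    violations.push(
      `total payload is ${total} bytes (limit ${budget.maxTotalBytes})`
    );
  }
  return violations;
}

// Example with made-up file names; the 4.5 MB image mirrors the case above.
const exampleBudget: Budget = { maxTotalBytes: 3000000, maxImageBytes: 500000 };
const exampleViolations = checkBudget(
  [
    { url: "/img/strawberries.jpg", type: "image", bytes: 4500000 },
    { url: "/js/app.js", type: "script", bytes: 400000 },
  ],
  exampleBudget
);
console.log(exampleViolations.join("\n"));
```

Failing a build when the returned list is non-empty would stop an oversized image before it reaches production.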

Netto

Netto could improve by reducing unused JS and optimizing image sizes. Nothing in particular caught my eye; deeper analysis is required to assess the structure of the JS libraries and their purpose. Image size optimization is technically the cheapest fix and would give the biggest performance boost.

Aldi

Aldi is importing a large Facebook Connect library that blocks rendering, and it has one completely unused CSS file. Otherwise, it has the common issues of large JS and image payloads.

Lidl

Lidl's mobile website is suffering from content shiftenitis. The website could be improved by lazy loading offscreen images, using the WebP image format, serving different image resolutions based on device screen size and setting expected image sizes in HTML/CSS to avoid large layout shifts.
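
The four suggestions can be combined in a single piece of markup. The sketch below generates it from a small helper. The `pictureMarkup` function, the `ResponsiveImage` shape and the `name-WIDTH.ext` file naming scheme are assumptions made for this example, not how Lidl's site is built.

```typescript
interface ResponsiveImage {
  basePath: string;       // hypothetical path without size or extension, e.g. "/img/offer"
  alt: string;
  width: number;          // intrinsic width, lets the browser reserve space
  height: number;         // intrinsic height, avoids layout shift
  widths: number[];       // pre-rendered widths available on the server (non-empty)
  aboveTheFold?: boolean; // images visible on load should not be lazy
}

// Escapes characters that would break out of a double-quoted attribute.
function escapeAttr(value: string): string {
  return value.replace(/&/g, "&amp;").replace(/"/g, "&quot;").replace(/</g, "&lt;");
}

// Builds a srcset such as "/img/offer-400.webp 400w, /img/offer-800.webp 800w".
function buildSrcset(basePath: string, ext: string, widths: number[]): string {
  return widths.map((w) => `${basePath}-${w}.${ext} ${w}w`).join(", ");
}

function pictureMarkup(img: ResponsiveImage): string {
  const loading = img.aboveTheFold ? "eager" : "lazy";
  const largest = Math.max(...img.widths);
  const webp = escapeAttr(buildSrcset(img.basePath, "webp", img.widths));
  const jpg = escapeAttr(buildSrcset(img.basePath, "jpg", img.widths));
  return [
    "<picture>",
    // Browsers with WebP support pick this source; others fall back to <img>.
    `  <source type="image/webp" srcset="${webp}" sizes="100vw">`,
    `  <img src="${escapeAttr(img.basePath)}-${largest}.jpg"`,
    `       srcset="${jpg}" sizes="100vw"`,
    `       width="${img.width}" height="${img.height}"`,
    `       loading="${loading}" alt="${escapeAttr(img.alt)}">`,
    "</picture>",
  ].join("\n");
}

const exampleMarkup = pictureMarkup({
  basePath: "/img/offer",
  alt: "Weekly offer",
  width: 800,
  height: 600,
  widths: [400, 800],
});
console.log(exampleMarkup);
```

The `sizes="100vw"` value assumes the image spans the full viewport width; a real layout would need a value that matches its own CSS.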

Føtex

Føtex is adding 3.5 seconds to its mobile website loading time with the Zendesk JS library. The support button could easily be changed to load the Zendesk scripts on demand when it is clicked. Offscreen image loading could also easily be deferred with the lazy loading attribute.
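
Here is a rough sketch of what on-demand loading could look like. It is not Føtex's code and does not use any Zendesk API: `createOnDemandLoader`, `injectScriptTag`, the script URL and the button id are all hypothetical names I chose for illustration. The loader starts the download on the first call only and reuses the same promise afterwards.

```typescript
// Anything that can fetch and execute a script by URL.
type ScriptInjector = (src: string) => Promise<void>;

// Returns a function that loads `src` the first time it is called and
// hands back the same promise on later calls, so the script loads once.
function createOnDemandLoader(
  src: string,
  inject: ScriptInjector
): () => Promise<void> {
  let pending: Promise<void> | undefined;
  return () => {
    if (!pending) {
      pending = inject(src).catch((err) => {
        pending = undefined; // allow a retry after a failed load
        throw err;
      });
    }
    return pending;
  };
}

// Browser injector: appends an async <script> tag and resolves once it loads.
// `document` is read from globalThis so the sketch also compiles without DOM typings.
function injectScriptTag(src: string): Promise<void> {
  const doc = (globalThis as any).document;
  return new Promise<void>((resolve, reject) => {
    const el = doc.createElement("script");
    el.src = src;
    el.async = true;
    el.onload = () => resolve();
    el.onerror = () => reject(new Error(`Failed to load ${src}`));
    doc.head.appendChild(el);
  });
}

// Browser usage (assumes a button with id "support-button" exists on the page):
//   const loadChat = createOnDemandLoader("https://example.com/chat-widget.js", injectScriptTag);
//   document.getElementById("support-button")
//     ?.addEventListener("click", () => { void loadChat(); });
```

With this wiring, visitors who never open the support chat never download the chat script.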

Rema1000

Rema1000 is a very bloated website, full of JS libraries and large image files. It is the only mobile website that crossed the 10 megabyte payload mark. Further analysis is required to determine the purpose of the JS libraries and what is actually needed for the initial load.

Meny

Meny has many of the same problems already listed: large images, render-blocking resources and unused JS.

Bilka

Bilka’s mobile website executes a lot of JS on initial page load, which causes a large first input delay. One noticeable script is a chat SDK which, judging by its name, could easily be loaded on demand when needed.

Summary

Overall, the mobile performance and user experience of supermarket websites in Denmark are not great. At the same time, the websites are not hopeless: they suffer from a common set of performance issues that can be addressed even with a small engineering budget. Delaying clearly unnecessary third-party scripts until they are needed and creating an image optimization pipeline are both well-understood problems.